Interference: Dead Air available now!

We know, we know; y’all aren’t here for a lengthy blog post or heartfelt reflections on the road we traveled to arrive at this moment.

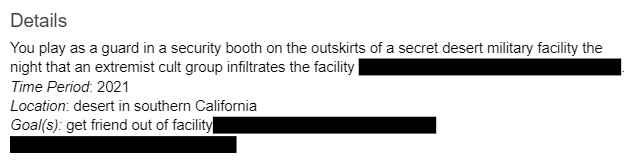

Because Interference: Dead Air is now available on Steam!

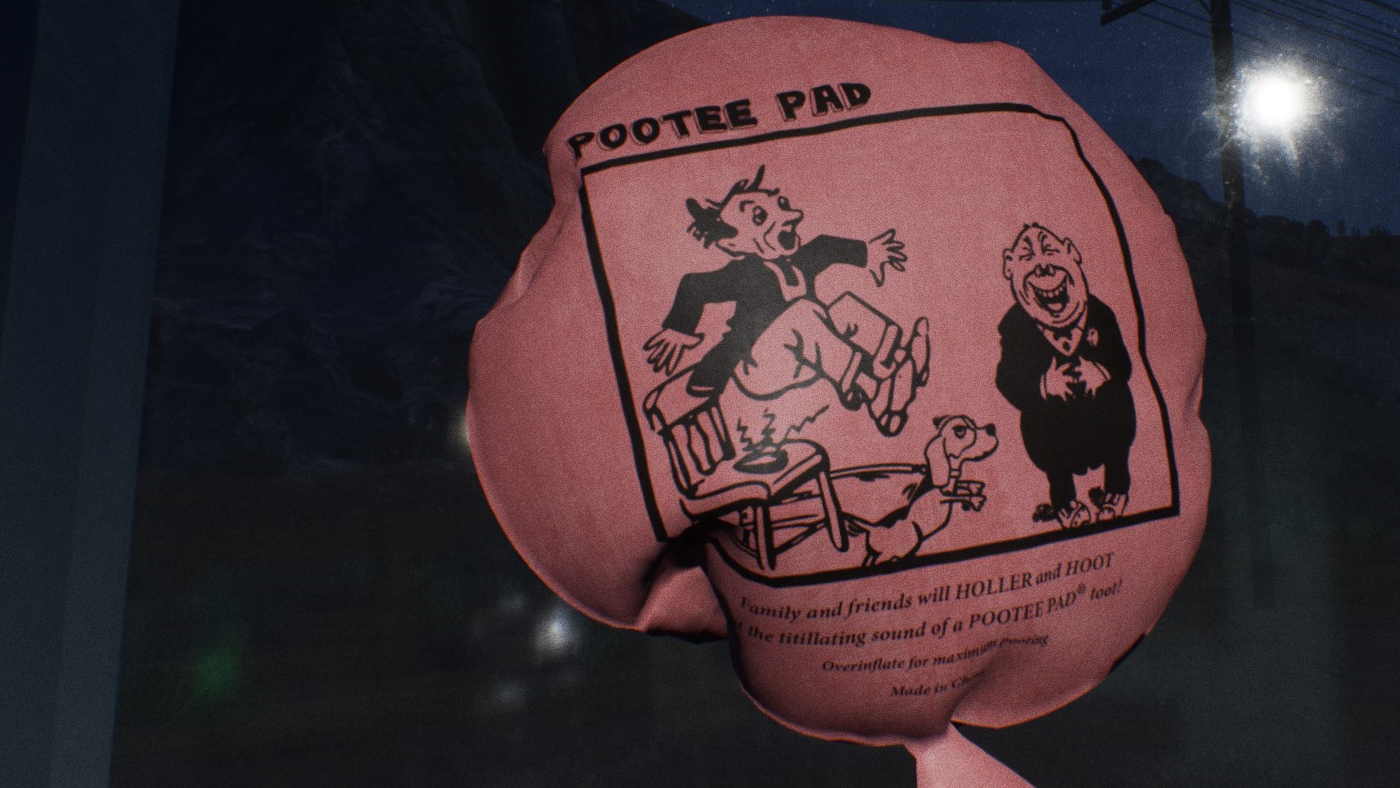

Peep that fresh launch trailer and get hyped, because it’s time — yes, it’s finally time — to interfere! Have a great first day at work.